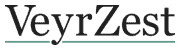

In late January 2026, The Lancet published the final results of the Mammography Screening with Artificial Intelligence trial, known as MASAI. Over 105,000 women in southwest Sweden, aged forty to eighty, were randomised between April 2021 and December 2022 to one of two screening pathways: AI-supported screen reading, in which a commercial AI system triaged examinations and provided detection support, or standard double reading by two radiologists without AI. The primary outcome was the rate of interval cancer — cancer diagnosed between screening rounds, within two years of a negative screening — which is the accepted endpoint for the effectiveness of a screening programme because it captures the cancers a programme missed. Interval cancer was 1.55 per thousand women in the AI-supported arm, compared with 1.76 per thousand in the standard arm. The reduction was twelve per cent, non-inferior against the pre-specified margin; the subgroup of aggressive, non-luminal-A cancers was reduced by twenty-seven per cent. Sensitivity rose by 6.7 percentage points with no change in specificity. Screen-reading workload fell by forty-four per cent.
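The twelve per cent figure follows from the two reported rates. A minimal Python check, in which the function name is illustrative and not taken from the paper:

```python
# Interval-cancer rates per 1,000 screened women, as quoted in the text above.
RATE_AI_PER_1000 = 1.55
RATE_STANDARD_PER_1000 = 1.76


def relative_reduction(control_rate: float, intervention_rate: float) -> float:
    """Fraction by which the intervention rate sits below the control rate."""
    return (control_rate - intervention_rate) / control_rate


reduction = relative_reduction(RATE_STANDARD_PER_1000, RATE_AI_PER_1000)
print(f"relative reduction: {reduction:.1%}")  # prints: relative reduction: 11.9%
```

Rounded to the nearest whole number, 11.9 per cent is the twelve per cent quoted above.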

This is the first randomised controlled trial of AI in cancer screening, and it is, by a substantial margin, the largest randomised trial of any medical AI system in any clinical setting. Its result is positive. It is also narrow, qualified, and — when read carefully — a clearer statement about what medical AI currently looks like when it is evaluated properly than any preceding study the field had available.

WHAT THE TRIAL ACTUALLY DID

The MASAI trial was designed as a non-inferiority study: the primary question was whether AI-supported screening produced an interval-cancer rate that was no worse than standard double reading. Non-inferiority, in trial design, is not a weak test. It is the correct test when the objective of an intervention is to preserve existing quality while changing something else — in this case, while reducing radiologist workload and improving detection sensitivity at the same specificity. The pre-specified non-inferiority margin was chosen such that any meaningful increase in missed cancers would have flagged the AI pathway as unsafe. The result was not only non-inferiority; the interval-cancer rate was lower in the AI arm, and the reduction in aggressive cancers was clinically meaningful.
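The logic of a non-inferiority test can be sketched in a few lines. The version below is one common textbook formulation, not the trial's own statistical analysis: it computes a rate ratio with a log-scale Wald confidence interval and asks whether the upper bound stays below a margin. The event counts, arm sizes, and margin are all hypothetical, chosen only so that the rates land near the per-thousand figures quoted earlier; they are not the trial's data, and the paper's actual margin and interval method are not stated here.

```python
import math
from statistics import NormalDist


def rate_ratio_ci(events_new, n_new, events_std, n_std, level=0.95):
    """Rate ratio (new / standard) with a log-scale Wald confidence interval."""
    ratio = (events_new / n_new) / (events_std / n_std)
    # Standard error of log(ratio) for two independent proportions.
    se = math.sqrt(1 / events_new - 1 / n_new + 1 / events_std - 1 / n_std)
    z = NormalDist().inv_cdf(0.5 + level / 2)
    log_ratio = math.log(ratio)
    return ratio, math.exp(log_ratio - z * se), math.exp(log_ratio + z * se)


def is_non_inferior(upper_bound: float, margin: float) -> bool:
    """Non-inferior if even the pessimistic end of the interval is below the margin."""
    return upper_bound < margin


# HYPOTHETICAL inputs for illustration only (not trial data, not the trial's margin).
ILLUSTRATIVE_MARGIN = 1.20
ratio, lower, upper = rate_ratio_ci(events_new=82, n_new=53_000,
                                    events_std=93, n_std=53_000)

print(f"ratio {ratio:.2f}, interval {lower:.2f} to {upper:.2f}")
print("non-inferior:", is_non_inferior(upper, ILLUSTRATIVE_MARGIN))
```

The point of the design is visible in `is_non_inferior`: the comparison is made against the upper bound, not the point estimate, so an intervention passes only if the data rule out a meaningful increase in missed cancers.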

The AI system used — Transpara, made by ScreenPoint Medical — was deployed in two roles. It triaged examinations into single-reading or double-reading queues based on a risk score, allowing lower-risk examinations to be read by a single radiologist and freeing capacity for higher-risk work. It also provided detection-support marks visible to the radiologists reading the images. The forty-four per cent reduction in workload came from the triage function; the detection improvement came from the combination of both.
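The triage role described above can be illustrated with a toy routing rule. This is a sketch under stated assumptions, not the vendor's or the trial's implementation: the 1 to 10 score scale, the threshold, the `Exam` type, and the ten sample examinations are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Exam:
    exam_id: str
    risk_score: int  # hypothetical 1-10 scale; higher means more suspicious


HIGH_RISK_THRESHOLD = 10  # hypothetical cut-off, not taken from the article


def readers_required(exam: Exam, threshold: int = HIGH_RISK_THRESHOLD) -> int:
    """Route high-risk exams to double reading, everything else to single reading."""
    return 2 if exam.risk_score >= threshold else 1


def workload_reduction(exams: list[Exam], threshold: int = HIGH_RISK_THRESHOLD) -> float:
    """Share of reads saved relative to double reading every exam."""
    baseline_reads = 2 * len(exams)
    triaged_reads = sum(readers_required(e, threshold) for e in exams)
    return 1 - triaged_reads / baseline_reads


# Toy batch: one exam at each score from 1 to 10 (invented for illustration).
toy_batch = [Exam(exam_id=f"exam-{s}", risk_score=s) for s in range(1, 11)]
toy_saving = workload_reduction(toy_batch)
print(f"reads saved in toy batch: {toy_saving:.0%}")
```

In this toy batch only one examination in ten is double-read, so most of the second reads disappear. That is the mechanism by which a triage rule reduces reading workload without removing the radiologist from any examination.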

Follow-up was two years — the standard window for interval-cancer assessment. Enrolment closed at the end of 2022; the primary-endpoint analysis was reported in January 2026 on 105,934 enrolled women. The safety analysis, published in Lancet Oncology in 2023 on the first 80,000, had already shown the trial was safe to continue. The screening-performance analysis, published in Lancet Digital Health in March 2025, had shown a twenty-nine per cent increase in cancer detection with no increase in false positives. The January 2026 paper closed the loop: detection up, false positives flat, workload down, interval cancers lower, aggressive interval cancers much lower.

WHAT THE RESULT MEANS

The cleanest statement of what the trial shows is this: in a population screening programme in a high-resource country, with experienced radiologists, a specific AI system, and a specific screening protocol, deploying the AI as both a triage tool and a detection aid produced a measurable reduction in the interval cancers that a screening programme is designed to prevent, while substantially reducing the radiologist workload required to produce that outcome. Each of those qualifications is doing work.

The scientific contribution is twofold. First, MASAI has converted what was a body of retrospective, observational, and simulation evidence into prospective, randomised evidence on a hard clinical endpoint. The retrospective work had been suggestive; simulation had been promising; paired-reader studies had shown diagnostic accuracy. None of these designs can rule out the confounders that plague real-world AI deployment. A randomised trial with interval-cancer outcomes can. Second, it has shown that the benefit is not just in the AI system but in the workflow design around it — the specific combination of triage plus detection support, with the human radiologist retained in every positive pathway, is what produced the result.

Compressed to a single line: a specific AI system, in a specific workflow, in a specific country, produced a measurable improvement in a specific screening endpoint.

WHAT THE RESULT DOES NOT MEAN

The result does not establish that AI replaces radiologists. MASAI was not designed to test that. In both arms of the trial, a human radiologist was retained in every positive pathway, including for examinations flagged by AI. The first author of the final paper, Jessie Gommers, stated the point directly: the result ‘does not support replacing healthcare professionals with AI as the AI-supported mammography screening still requires at least one human radiologist to perform the screen reading, but with support from AI’. The trial’s effect on radiologist workload, a forty-four per cent reduction, is large. It is not a displacement. It is a redistribution.

The result does not establish that AI will perform similarly elsewhere. MASAI was conducted in Sweden, a country with an unusually robust national screening programme, with experienced radiologists in both arms, one specific AI system, one specific imaging vendor’s equipment, and a population that consented at high rates. The authors of the trial are explicit about these constraints. The result generalises to similar settings. It generalises less cleanly to less-resourced settings, to populations with lower screening attendance, to equipment other than what was tested, and to AI systems other than Transpara.

The result does not establish that AI in medicine will generalise across clinical questions. Mammography screening is, in a specific sense, an unusually favourable setting for AI. The task is bounded, the input modality is standardised, the ground truth is obtainable via biopsy and follow-up, and the deployment infrastructure — PACS systems, radiology reading queues — is already digital. Few other clinical questions share all four properties. The MASAI result is strong evidence for AI in mammography. It is weaker evidence, and in some cases almost no evidence, for AI in clinical questions whose structure is different.

WHAT THE DEPLOYMENT PATTERN ALREADY SHOWS

Around the MASAI result, a broader deployment pattern is now visible. A retrospective German study of nationwide real-world mammography AI deployment, published in Nature Medicine in 2025, reported similar effects at population scale. A prospective Korean study (AI-STREAM) has returned preliminary findings consistent with the Swedish and German evidence. A Danish implementation study published in Radiology in 2024 documented the early real-world experience. These are not independent replications of MASAI; they are variants of the same class of study. But they sit coherently with the randomised result: across several high-resource settings, AI support appears to produce small improvements in cancer detection, meaningful reductions in radiologist workload, and no increase in false positives.

Three features of the resulting picture are worth stating precisely. First, the evidence is narrow in domain. Mammography screening is the clinical question that has been evaluated. Several adjacent questions (diabetic retinopathy screening, specific dermatology tasks, some narrow pathology tasks) have accumulated similar evidence; most of clinical medicine has not. Second, the evidence is about a particular deployment pattern: AI as a support tool inside a workflow where human clinicians are retained in every decision pathway. The alternative deployment pattern, AI as autonomous decision-maker, has not been trialled at this scale on a hard clinical endpoint in any developed-country setting. Third, the clinical effect sizes are real but modest. The twelve per cent reduction in interval cancers matters to the women whose cancers are caught earlier because of it; it is not transformational at population level, and it does not change the overall mortality arithmetic of breast cancer in any dramatic way.
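The "real but modest" point is easier to see in absolute terms. The back-of-envelope Python sketch below uses only the two reported point estimates and ignores their uncertainty; the variable names are illustrative.

```python
# Interval-cancer rates per 1,000 screened women, as quoted earlier in the article.
RATE_STANDARD = 1.76
RATE_AI = 1.55

# Absolute difference: interval cancers avoided per 1,000 women screened.
avoided_per_1000 = RATE_STANDARD - RATE_AI

# Women screened with AI support per one fewer interval cancer (point estimate only).
women_per_case_avoided = 1000 / avoided_per_1000

print(f"avoided per 1,000 women: {avoided_per_1000:.2f}")
print(f"women screened per interval cancer avoided: {women_per_case_avoided:,.0f}")
```

On these point estimates the difference is about 0.21 interval cancers per thousand women, or one fewer interval cancer for every few thousand women screened. An interval cancer avoided is not the same thing as a death avoided, which is why the figure leaves the mortality arithmetic largely unchanged.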

WHAT MASAI TELLS US ABOUT THE WIDER STORY

The wider implication, for readers trying to make sense of medical AI outside the narrow domain where MASAI applies, is methodological. The MASAI trial is the version of the medical AI story that survives the transition from promise to evidence. It is smaller than the promise. It is much larger than nothing. It is domain-specific, vendor-specific, workflow-specific, and supported by roughly five years of accumulated prospective work. It was not obtained quickly, cheaply, or cleanly. It required a randomised trial of the scale and duration of a drug trial — because the question being asked, properly stated, is the same kind of question.

This is the lesson the rest of medical AI will either learn or suffer for not learning. The evidence base that will actually justify clinical deployment is prospective, comparative, and measured against endpoints the patient cares about. Where that evidence exists, the technology earns deployment. Where it does not, marketing claims and retrospective accuracy numbers are doing the work of evidence. The MASAI result is encouraging for the field not primarily because of its specific number but because of the standard it sets. The field has now shown, once, that AI in medicine can produce a prospective, randomised improvement on a hard outcome under realistic conditions. The question for the next five years is how many times that demonstration can be repeated, in how many settings, on how many clinical questions. The answer to that question will be the real deployment story, not the press releases that preceded it.

PRIMARY SOURCES

— Gommers J, Hernström V, Sartor H, et al. Interval cancer, sensitivity, and specificity comparing AI-supported mammography screening with standard double reading without AI in the MASAI study: a randomised, controlled, non-inferiority, single-blinded, population-based, screening-accuracy trial. The Lancet. 2026 Jan 31. https://www.thelancet.com/journals/lancet/article/PIIS0140-6736(25)02464-X/abstract

— Lång K, Josefsson V, Larsson A-M, et al. Artificial intelligence-supported screen reading versus standard double reading in the Mammography Screening with Artificial Intelligence trial (MASAI): a clinical safety analysis. Lancet Oncology. 2023 Aug;24(8):936–944. https://pubmed.ncbi.nlm.nih.gov/37541274/

— Hernström V, Josefsson V, Sartor H, et al. Screening performance and characteristics of breast cancer detected in the MASAI trial. The Lancet Digital Health. 2025 Mar;7(3):e175–e183. https://pubmed.ncbi.nlm.nih.gov/39904652/

— Eisemann N, Bunk S, Mukama T, et al. Nationwide real-world implementation of AI for cancer detection in population-based mammography screening. Nature Medicine. 2025;31:917–924. https://pubmed.ncbi.nlm.nih.gov/39805916/