The Federal Trade Commission opened an inquiry into Publishing.com in April, examining a coaching operation that allegedly trained customers to flood Amazon’s Kindle Direct Publishing platform with AI-generated books. The complaints describe a familiar arc. Customers were told to borrow against credit to enroll. They were told that publishing dozens of titles was a path to durable income. They were told that AI tools made authorship a matter of throughput rather than craft. The investigation is one signal. The Amazon policy that limits Kindle authors to three new titles per day is another. The disclosure mandate that requires AI-generated books to be flagged is a third.

These are the visible edges of a quieter structural shift. The cost of producing a book that is grammatically intact, narratively coherent, and topically plausible has fallen to nearly zero. That is the change. Everything else, from the disclosure debates to the platform policies to the FTC complaints, is downstream of that single fact.

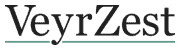

The question worth holding is what survives when production cost decouples from the labor of authorship. Publishing as an institution has weathered three previous compressions of cost. Movable type made books portable and cheap; the paperback put novels within reach of anyone with a few coins; the internet stripped distribution costs to bandwidth. In each case literary culture absorbed the shock and continued — sometimes reshaped, sometimes diminished, always recognizable. The current compression is different in one respect. The earlier shifts reduced the cost of moving or reproducing words. They did not reduce the cost of writing them.

Generative models do reduce that cost. The consequence is not that AI books are individually bad; many are, but quality is not the structural problem. The structural problem is that the volume of plausible text now exceeds any reader’s capacity to filter. What that does to a literary culture is recalibrate value. Curation rises. Authorship-as-asset falls. The middle layer is the most exposed: midlist authors whose royalties afford a tenuous living and whose books seed the next generation of readers.

What endures, then, is what was already most durable in the system. Works whose authority is institutional — university presses, prize-vetted houses, archived journals — gain relative weight, because their imprint is itself a filter. Works whose authority is generational — books that have already accumulated decades of citation — gain relative weight for the same reason. Works whose authority was earned slowly, through a single author’s accumulated body of disciplined argument, gain relative weight too, because no machine can yet produce a coherent decade of work.

What erodes is the speculative, the midlist, the discoverable. The author who would have been read in 1995 because a bookstore stocked their cover face-out is structurally disadvantaged when the cover is a thumbnail in an algorithmic feed alongside ten thousand machine-generated competitors. The forward pipeline of literary culture — the place where minor authors become major over fifteen years — runs through that midlist and that discoverability. If that pipeline narrows, the literary commons will narrow with it.

This is not a prediction about the death of reading. The decline in reading, documented in National Endowment for the Arts data and replicated in U.K. and Australian surveys, was visible long before the volume shock arrived. People are reading less for pleasure, and the cause is mostly screens, not AI books. The volume question is different. It is about what readers are reading when they read, and what kind of authorial ecosystem can be sustained when production economics cease to discipline supply.

The Publishing.com case is a useful instrument because it makes the underlying logic legible. The pitch was honest in its way: throughput is the path. Borrow money, enroll in the program, output dozens of books. The Federal Trade Commission has a different reading. So do the platforms, which have begun, with the slowness characteristic of platforms, to act on volume directly. Amazon’s three-titles-a-day cap is not a cultural intervention. It is a quality-control measure aimed at protecting the margins of the platform’s $28-billion book business. If that business were not threatened, the cap would not exist.

What the cultural institutions of literary life can do is harder to articulate. The honest position is that authorship has always been a kind of slow trust. A name on a cover is a wager that the labor behind the cover was real. The wager fails when the labor disappears. Returning to durable forms of authorial trust (institutional vetting, longitudinal record, the patient accumulation of a body of work) is not a defense of nostalgia. It is a recognition that the compression of production costs has changed what the markers of trust must do.

The institutions that survive will be those whose imprimatur is harder to fake than text. The authors who survive will be those whose work cannot be produced by sampling its surface. What the rest of literary culture does in response is being decided now, in publisher meetings and platform policy memos, mostly without public language. The evidence is in the policies. They are not optimistic policies.