In February 2026, the International Energy Agency published Electricity 2026, its annual electricity outlook, expanded this year to a five-year horizon. Buried in the demand chapters is the figure that ought to anchor most current AI conversations and does not. United States electricity demand is forecast to grow by roughly two percent annually through 2030 — more than double the pace of the previous decade. Data centres are expected to account for almost half of that growth. The same week, the IEA reported that data-centre electricity demand surged seventeen percent in 2025, well above the three-percent growth in global electricity demand overall. AI-focused data-centre demand is poised to triple by 2030.
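
The growth figures above are easier to compare once compounded. The following is a minimal sketch, not the IEA's method. It assumes a 2025 base year and a five-year window to 2030 (the window is an assumption, as are the function names), and converts the roughly two percent annual growth into a cumulative figure and the "triple by 2030" projection into an implied annual rate.

```python
def cumulative_growth(annual_rate: float, years: int) -> float:
    """Total fractional growth after compounding annual_rate over years."""
    return (1 + annual_rate) ** years - 1


def implied_annual_rate(multiple: float, years: int) -> float:
    """Constant annual growth rate that yields the given multiple over years."""
    return multiple ** (1 / years) - 1


# Assumption: 2025 is the base year, so "through 2030" spans five years.
YEARS = 5

# Roughly two percent annual growth in US electricity demand (from the text).
us_cumulative = cumulative_growth(0.02, YEARS)

# AI-focused data-centre demand tripling by 2030 (from the text).
ai_annual = implied_annual_rate(3.0, YEARS)

print(f"US demand, cumulative growth over {YEARS} years: {us_cumulative:.1%}")
print(f"AI data-centre demand, implied annual growth: {ai_annual:.1%}")
```

Under those assumptions, two percent a year compounds to about ten percent over the period, while tripling implies annual growth of roughly twenty-five percent.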

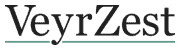

These numbers reframe the AI-investment story. The capital expenditure of the five largest technology firms exceeded $400 billion in 2025 and is forecast to grow by a further seventy-five percent in 2026. Most of that capital does not buy compute directly. It buys the buildings, cooling, generators, transformers, substations, and grid-connection rights that the compute requires. The bottleneck binding the system is not chip supply, model architecture, or research talent. It is electricity, transmitted through a grid that was not designed for what is being asked of it.

The grid bottleneck is the part of the story that has gone least examined. Interconnection queues — the lists of generation and load projects waiting for a utility to connect them — have grown sharply. The IEA’s report finds that, on US utility data, only a small fraction of projects in these queues correspond to firm, financed builds. The rest are speculative — developers staking position against the possibility of construction, knowing that connection rights are scarce and queue ordering matters. Utilities and grid operators have to plan against demand forecasts that are, by their own admission, partially fictional. They are then routinely accused of either over-building or under-building against those forecasts.

The local consequences are visible already. A March 2026 Consumer Reports analysis found that residential electricity rates in communities near major data-centre clusters in Virginia, Texas, and Georgia have risen by eight to fifteen percent in eighteen months, with the increases attributable in significant part to grid investments required to serve hyperscaler load. The political mechanics of this are unstable. Public utility commissions are being asked to approve rate increases on residential customers to fund infrastructure whose primary beneficiary is a small number of well-capitalised technology firms. The arrangement will not hold indefinitely without legislative attention.

The supply response has taken a particular form. Constrained by slow grid connections, hyperscalers and their developer partners are increasingly pursuing onsite natural gas generation — building their own power plants adjacent to data centres rather than waiting for utility connections. The IEA notes this is largely a US phenomenon. It is also a regulatory question that the climate framework of the previous decade did not anticipate: large-scale fossil-fuel buildout, financed by firms that have publicly committed to net-zero, justified on the basis of a service-delivery deadline. Whatever else this is, it is not the energy story that the AI sector’s communications have told.

There is a serious counterargument, and it should be addressed. Power consumption per AI task is falling. Efficiency improvements at the chip level (newer GPU architectures, higher floating-point efficiency), at the model level (smaller, faster models for inference), and at the data-centre level (better cooling, higher rack density) have together driven median energy per text query down to roughly 0.24 to 0.3 watt-hours, from estimates near 2.9 watt-hours two years ago, a reduction of roughly ten- to twelvefold. A Brookings analysis notes that this is among the fastest efficiency improvements in the history of any energy-using technology.

That counterargument fails on a Jevons reading: when efficiency gains make each task cheaper, usage expands, and total consumption can rise rather than fall. Per-task efficiency is improving, but total tasks are growing faster. The result is that aggregate AI electricity consumption has risen, is rising, and is forecast to keep rising on the IEA’s central scenario. Agentic AI (long-horizon multi-step reasoning, persistent background tasks) is also more energy-intensive per user session than chat. Efficiency gains do not, in this case, produce energy savings. They produce more usage.
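
The Jevons arithmetic can be made concrete with the per-query figures quoted earlier. This is an illustrative sketch only: it covers text-query inference and ignores training and other workloads. The function names are hypothetical, and the 20x volume multiple is an assumed value chosen for illustration, not a figure from any source.

```python
OLD_WH_PER_QUERY = 2.9            # earlier estimate cited in the text
NEW_WH_PER_QUERY = (0.24, 0.30)   # current median range cited in the text


def break_even_volume_multiple(old_wh: float, new_wh: float) -> float:
    """Query-volume growth at which total energy use is unchanged."""
    return old_wh / new_wh


def total_energy_ratio(old_wh: float, new_wh: float, volume_multiple: float) -> float:
    """New total energy divided by old total energy (above 1 means a net rise)."""
    return (new_wh / old_wh) * volume_multiple


# The smaller efficiency gain (0.30 Wh) gives the lower break-even multiple.
break_even_low = break_even_volume_multiple(OLD_WH_PER_QUERY, NEW_WH_PER_QUERY[1])
break_even_high = break_even_volume_multiple(OLD_WH_PER_QUERY, NEW_WH_PER_QUERY[0])
print(f"Break-even volume growth: {break_even_low:.1f}x to {break_even_high:.1f}x")

# Hypothetical volume growth, for illustration only (not a sourced figure).
ASSUMED_VOLUME_MULTIPLE = 20
for new_wh in NEW_WH_PER_QUERY:
    ratio = total_energy_ratio(OLD_WH_PER_QUERY, new_wh, ASSUMED_VOLUME_MULTIPLE)
    print(f"At {new_wh} Wh/query and {ASSUMED_VOLUME_MULTIPLE}x volume: "
          f"total energy is {ratio:.2f}x the old level")
```

On these figures, query volume has to grow by more than about ten to twelve times before aggregate consumption rises. Any growth beyond that break-even point turns the efficiency gain into a net increase.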

There is also a regional concentration story that compounds the aggregate. A recent peer-reviewed analysis finds that more than ninety percent of projected AI compute capacity is sited in three regions: North America, Western Europe, and the Asia-Pacific. Within those regions, particular nodes — Northern Virginia, Ireland, Oregon — show local power-system stress indexes well above the level at which planners flag grid vulnerability.

The argument worth holding is that the AI capability story and the AI electricity story have been told as separate conversations when they are the same conversation. Whether the next twenty-four months produce the productivity inflection that frontier-lab forecasts imply will be settled, in significant part, by whether the grid can be made to deliver the load. Onsite gas generation is the expedient response. The strategic responses (long-duration storage, transmission expansion, nuclear restarts) have lead times measured in years, not quarters.

What this reveals is that an industry that has talked about itself as software has bought itself a heavy-industry constraint. The constraint is real, the timelines for relieving it are long, and the political economy of who pays for the relief is unsettled. Anyone making decisions on the assumption that AI capability will be delivered at the rate the lab roadmaps imply should have those features on the dashboard. The grid is the question.